
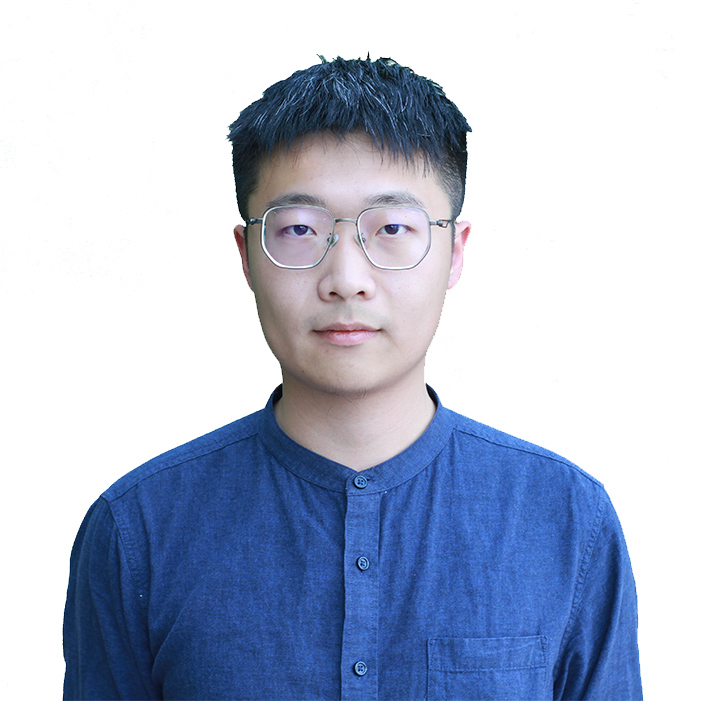

Speaker: Bolin Shen

Date: March 3, 11:45 – 12:45 pm

Abstract: Small language models (SLMs) have recently gained increasing attention for their cost and computational efficiency and their competitive performance across a wide range of reasoning tasks. However, when reasoning inputs contain irrelevant context, SLMs are substantially more prone to distraction than large language models (LLMs), and their performance degrades severely. Through a fine-grained analysis of inference-time attention, we identify the underlying issue: SLMs are markedly worse at distinguishing relevant context (RC) from irrelevant context (IC), as evidenced by consistently lower AUROC scores when attention over RC and IC tokens is treated as a binary discrimination problem. As a result, SLMs often assign high attention to distracting tokens in IC and mistakenly incorporate them as evidence during reasoning, which disrupts effective evidence aggregation. To address this limitation, we propose ATOMIC. Our approach first performs task-conditioned, context-level filtering to suppress irrelevant information; it then introduces an Evidence Likelihood Score to amplify attention on critical evidence tokens within RC; finally, it reallocates attention during answer inference to further reinforce key evidence. Extensive experiments across multiple mathematical reasoning benchmarks demonstrate that our method achieves state-of-the-art performance.

Biographical Sketch: I am a first-year Ph.D. student in Computer Science at Florida State University, where I am fortunate to work under the supervision of Prof. Yushun Dong. Before that, I completed my master's degree at the University of Michigan, Ann Arbor. My research mainly focuses on strengthening small language models (SLMs), especially by improving their memory mechanisms and reasoning abilities to achieve efficient yet powerful intelligence.
In parallel, I investigate Graph Neural Networks (GNNs) with an emphasis on model extraction attacks and defense mechanisms. Ultimately, my goal is to build secure, robust, and trustworthy machine learning systems that contribute to the broader vision of Responsible AI.

Location (in person only): LOV 353
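The abstract's AUROC measurement can be illustrated with a minimal sketch. It assumes each context token already has a single attention score (for example, averaged over heads and layers; that aggregation is an assumption, not a detail from the talk) and a label marking it as RC or IC. AUROC is then the probability that a randomly chosen RC token receives more attention than a randomly chosen IC token, with ties counted as one half. The function name `attention_auroc` and its inputs are hypothetical and are not the speaker's code.

```python
from typing import Sequence


def attention_auroc(attention: Sequence[float], is_relevant: Sequence[bool]) -> float:
    """AUROC of attention scores for separating RC (positive) from IC (negative) tokens.

    Hypothetical helper: equals the fraction of (RC, IC) token pairs in which the
    RC token gets strictly more attention, counting equal-attention pairs as 0.5.
    """
    if len(attention) != len(is_relevant):
        raise ValueError("attention and is_relevant must have the same length")
    rc = [a for a, r in zip(attention, is_relevant) if r]
    ic = [a for a, r in zip(attention, is_relevant) if not r]
    if not rc or not ic:
        raise ValueError("need at least one RC token and one IC token")

    # Compare every RC token against every IC token (quadratic, but explicit).
    wins = 0.0
    for a_rc in rc:
        for a_ic in ic:
            if a_rc > a_ic:
                wins += 1.0
            elif a_rc == a_ic:
                wins += 0.5
    return wins / (len(rc) * len(ic))


if __name__ == "__main__":
    # Toy attention scores over six context tokens; True marks an RC token.
    scores = [0.30, 0.25, 0.05, 0.20, 0.10, 0.10]
    labels = [True, True, False, False, True, False]
    print(round(attention_auroc(scores, labels), 3))  # prints 0.833
```

A value of 1.0 means attention ranks every RC token above every IC token, while 0.5 means it separates them no better than chance. The pairwise loop keeps the definition visible; for large inputs, `sklearn.metrics.roc_auc_score(labels, scores)` computes the same quantity.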

DEPARTMENT OF COMPUTER SCIENCE

College of Arts and Sciences